Why Your Best People Are About to Leave

The harder you grip, the more you lose.

Here's the pattern I keep seeing in organizations that restrict AI tools:

They're not preventing the risk they fear. They're manufacturing it.

I was talking to Magnus Paues recently on the Thinkroom Podcast. He runs Sweden's largest AI podcast, tests dozens of tools every month, and consults with mid-sized companies across Europe. He described a conversation with a procurement director at a German-owned firm. Strict rules. No AI tools allowed. The employees sat in what Magnus called "a little bubble" while the technology revolution bloomed around them. Not allowed to touch it.

Magnus's diagnosis was blunt: "The first ones to quit will be the high performers. They're the ones who feel fastest that this straitjacket isn't good enough for their career development."

He's right. But not for the reason you might think.

The Self-Fulfilling Prophecy

The logic seems airtight. AI tools pose security risks.

Employees might leak sensitive data. Compliance is uncertain.

So you restrict access. Problem solved.

Except it isn't.

A recent Gartner survey found that 69% of employees admitted to bypassing their organization's cybersecurity rules in the past year. Nearly three-quarters said they'd do it again if it helped them hit a business goal. In the context of generative AI specifically, a Cisco study revealed that 60% of professionals have pasted internal company information into AI tools despite official prohibitions. Including security staff. Including privacy staff. Nearly a third entered customer data.

Read that again. The people you trust to enforce the rules are breaking them.

This is what happens when you treat restriction as strategy. You don't stop the behavior. You drive it underground. The employee who would have used AI responsibly with proper guardrails is now using it anyway, on their personal device, with zero oversight. You've traded visible, manageable risk for invisible, uncontrollable risk.

The management theorist Bjarte Bogsnes put it perfectly: "When an entire management model reeks of mistrust and control mechanisms, the result might be more, not less, of what we try to prevent."

You signal you don't trust your people. They respond by becoming untrustworthy. Congratulations.

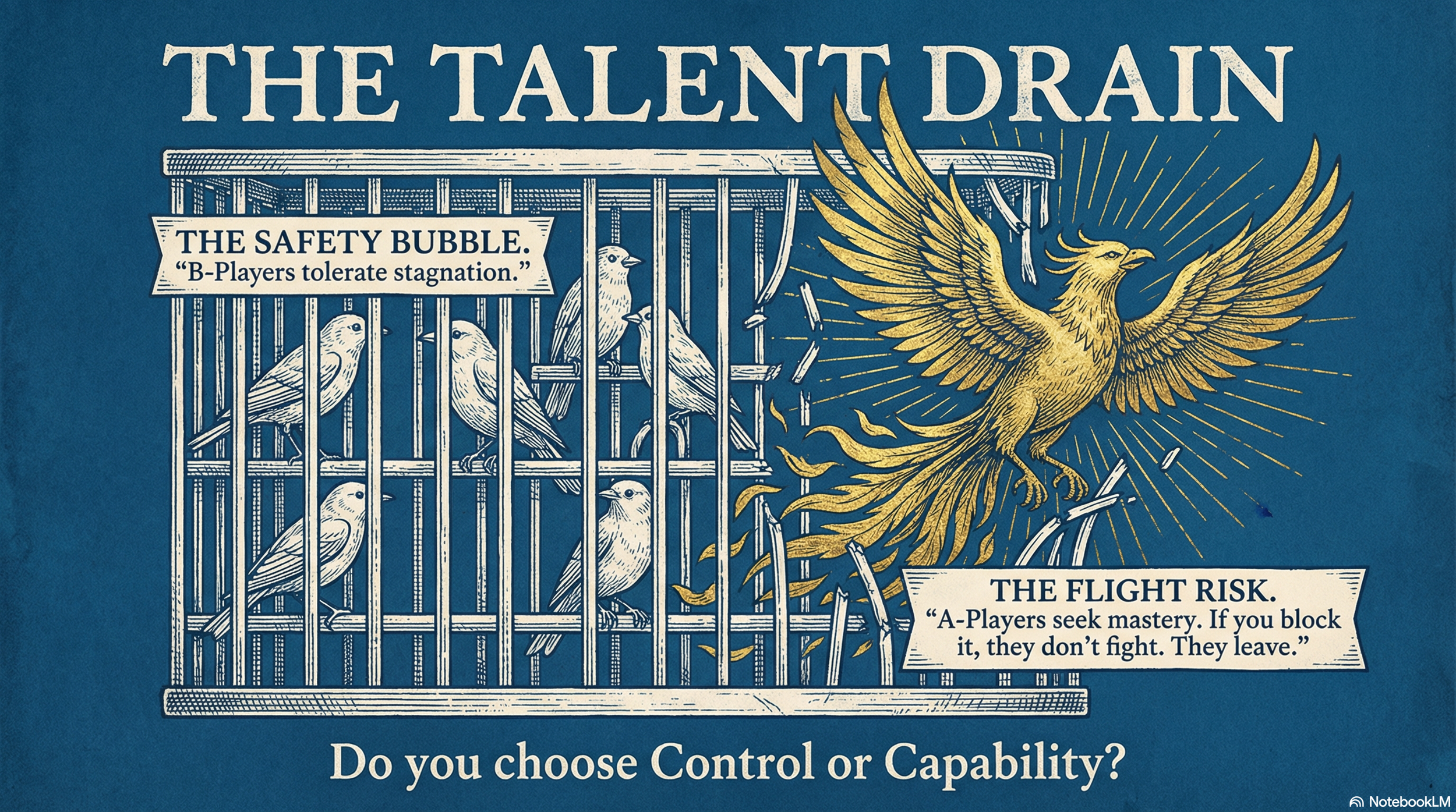

Why A-Players Leave First

Here's what makes this worse. The employees most likely to bypass your restrictions are also the ones most likely to use AI responsibly if you let them. And they're the first to leave when you don't.

High performers have options. Their skills are portable. Recruiters are calling. Former colleagues are starting companies. The barrier to exit is low. When they sense an organization that treats them like potential criminals rather than trusted professionals, they don't fight it. They just leave.

Research on what drives top talent out is consistent: it's rarely money. It's autonomy, mastery, and the ability to do meaningful work. Block those and commitment erodes fast. One study found that high performers were far more likely than average performers to cite lack of advancement opportunities as a reason to leave. B-players tolerate stagnation. A-players don't.

Magnus described it viscerally: "Put me in a chair at a big company and tell me I get to watch this technology revolution blooming all around me, but I'm sitting in a little bubble and I'm not allowed to do anything? I would quit on the spot."

Most A-players won't quit on the spot. They'll start looking quietly. By the time you notice, they've already decided.

And here's what makes this systemic: saying no carries no penalty. Block something that could have transformed the business? Nobody notices. Approve something that goes sideways? Career risk. So the incentive structure rewards restriction. A thousand small, sensible "no's", each one defensible, and you drift toward irrelevance while feeling responsible.

The Actual Choice

I'm not arguing for recklessness. Real security matters. Guardrails matter. But there's a difference between managing risk and performing risk management.

Security theater is what Bruce Schneier calls it. Controls that give the appearance of safety without actually improving it. Making life painful for the innocent while sophisticated threats route around them easily.

The question isn't whether to have policies. It's whether your policies reflect trust or mistrust. Whether they enable responsible use or drive irresponsible workarounds. Whether you're building an organization that attracts A-players or repels them.

Your best people can sense the difference. They're reading the signals right now.

The irony is that the companies gripping tightest are the ones losing the most. Losing talent. Losing innovation. Losing the very capabilities they'll need to compete.

The choice isn't between control and chaos. It's between the illusion of control that creates real chaos, and the disciplined trust that creates real capability.

Your best people are watching which one you choose.