The Capability Paradox

The better AI gets at your job, the worse you get at it.

An article inspired by a podcast conversation with Bryan Reimer.

Here's the uncomfortable question I keep circling back to: Would I be as effective in my role today if AI disappeared tomorrow?

I've spent two years building AI into everything I do. Research that took days now takes 45 minutes. Content I couldn't afford to produce alone now flows constantly. Strategic frameworks get stress-tested against perspectives I'd never have accessed.

But something else happened too. Something I didn't notice until Bryan Reimer named it.

Bryan has spent 25 years at MIT studying what happens when humans and machines work together. His research spans self-driving cars, aviation, nuclear plants. Different industries, same pattern every time: the more you automate, the less capable you become at supporting that automation.

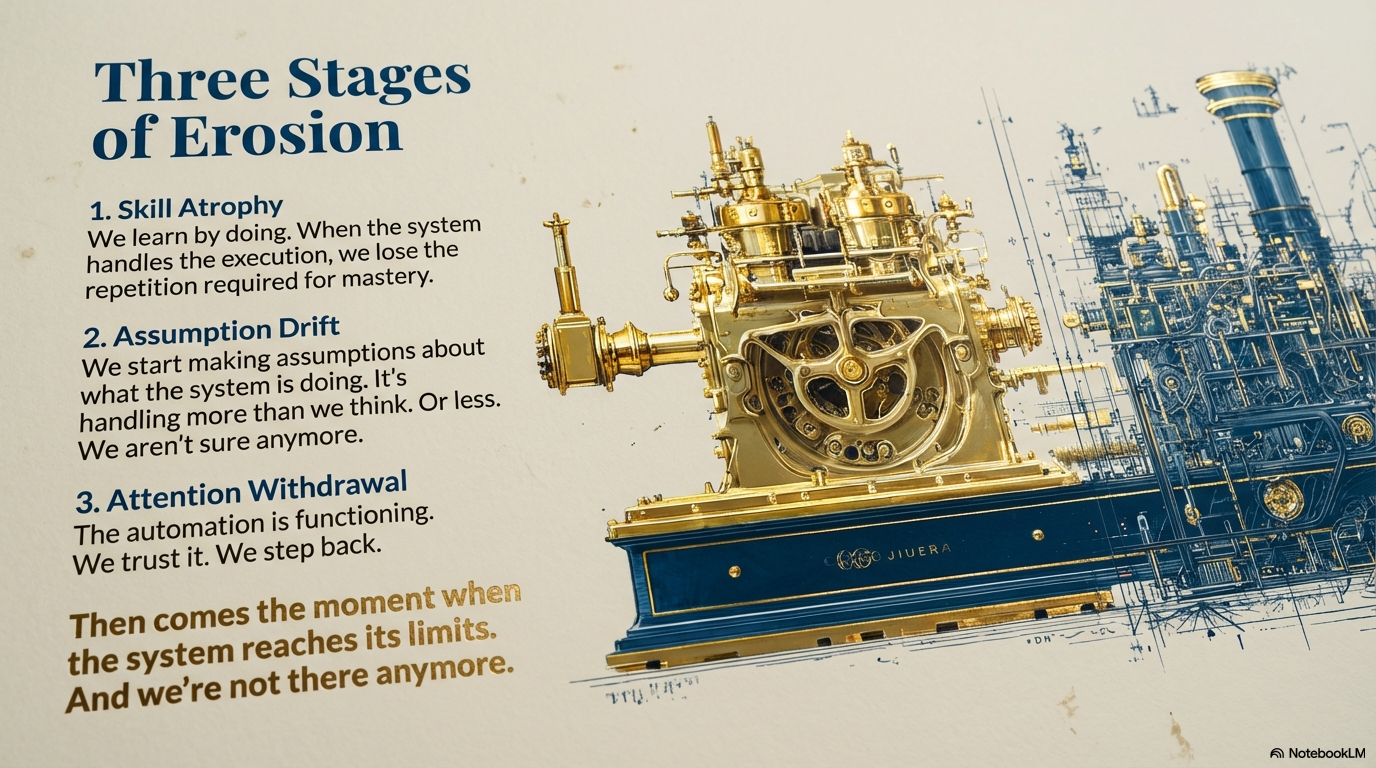

Three things happen. First, your skills erode. We learn by doing. When the machine does the work for us, we stop flexing the cognitive muscles that built our expertise. Second, we start making assumptions about what the system is doing. It's handling more than we think. Or less. We're not sure anymore. Third, our attention wanders. The automation is functioning. We trust it. We step back.

Then comes the moment when the system reaches its limits. And we're not there anymore.

The Wet Computer Problem

The neuroscience is straightforward. Your brain is plastic, not elastic. It doesn't snap back to previous capability. It holds the shape of whatever you've been practicing.

Studies on chatbot usage show measurable drops in neural activity during delegated tasks. Not because people are being lazy. Because the brain is efficient. Why maintain circuitry you're not using?

This is the same mechanism that makes deep practice valuable. Repeated engagement with difficulty builds neural architecture. Remove the difficulty, remove the building.

Bryan put it simply: "We tend to be lazy. The average individual would love to rest, watch TV, sit in a chair. So we need to think about guardrails so we don't become over-reliant on automation."

He's not being cynical. He's describing biology.

Two Populations Are Forming

Here's where it gets interesting for anyone running a team.

Same tool. Same organization. Completely different outcomes depending on how people use it.

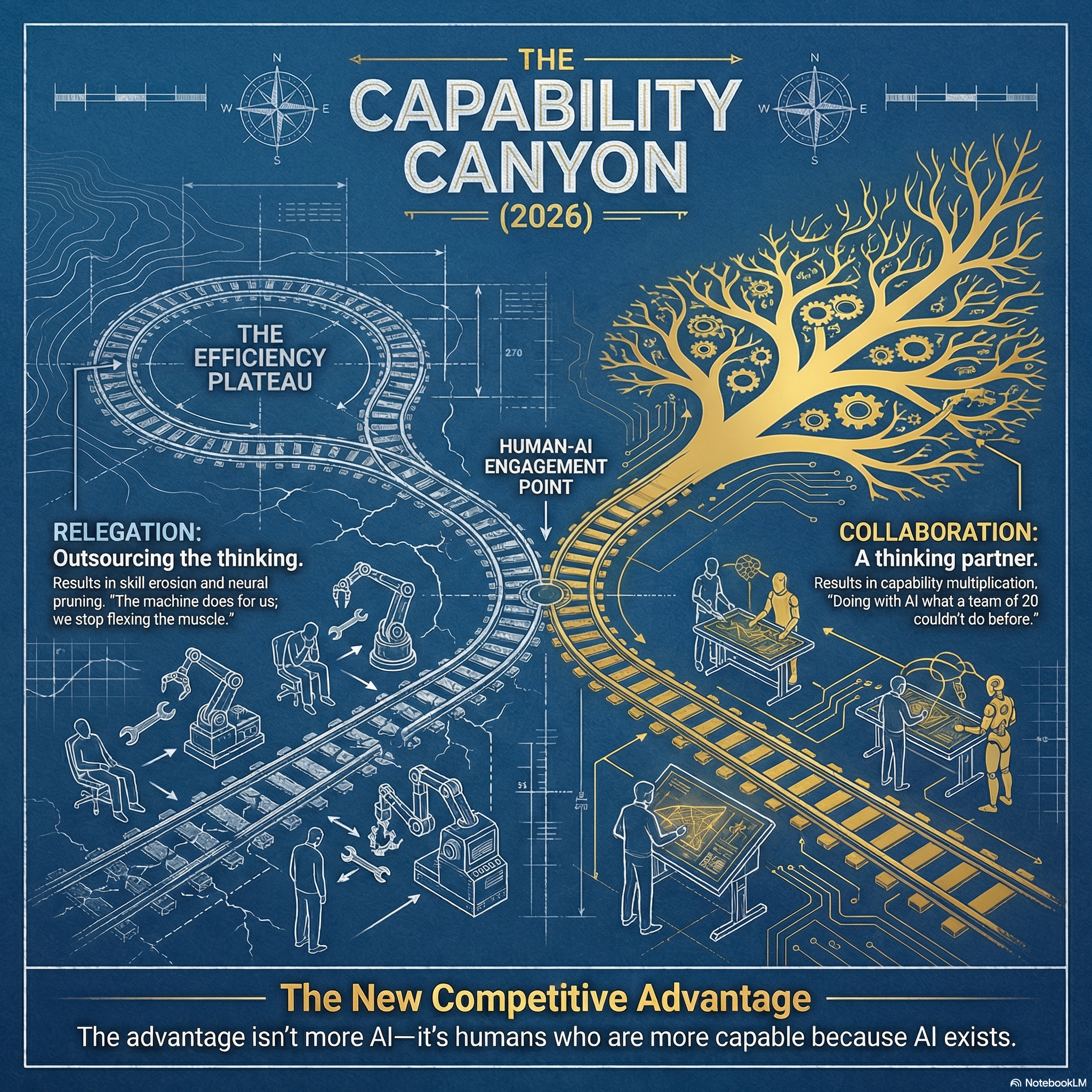

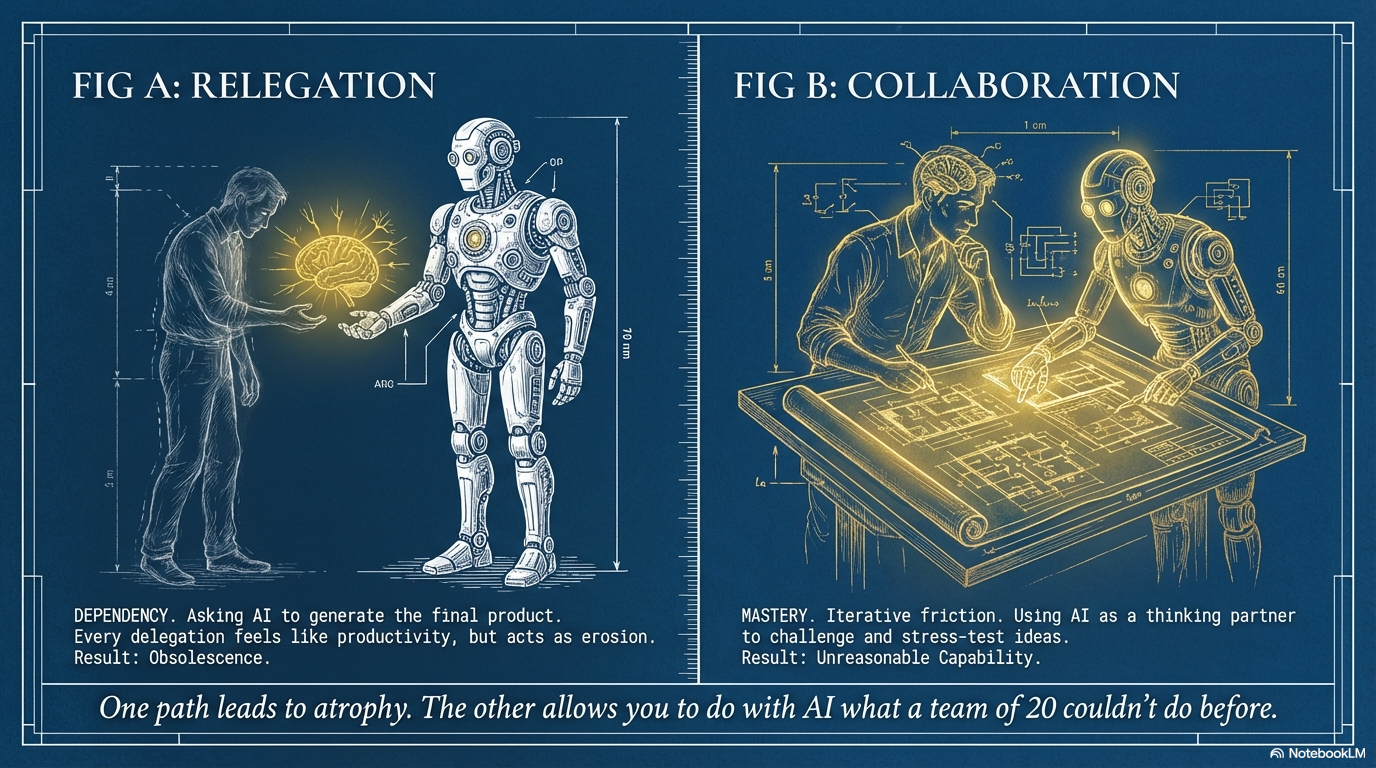

One population asks AI to write the essay. Draft the email. Generate the analysis. They're not building skills. They're outsourcing thinking. Every delegation feels like productivity. It's actually erosion.

The other population jams with it. Back and forth. Iterating. Challenging. Treating it like a thinking partner who happens to have read everything. They're building new cognitive architecture. Capability that didn't exist before.

Bryan's co-author Magnus calls it the difference between "relegating to the robot" and "using the robot as a collaborator."

The first path leads to dependency dressed as efficiency. The second creates what Bryan calls the ability to do "with AI what a team of 20 couldn't do before."

Both paths feel like progress. Only one compounds.

The Uncomfortable Admission

I'll be honest about where I land on this.

Two years ago, research for an article meant days of reading, note-taking, synthesis. Now I can have a rich contextual brief in under an hour. That's genuine capability multiplication.

But I've also noticed something else. When I try to write without AI in the loop, it feels harder than it used to. Is that because I'm attempting more ambitious work? Or because I've trained myself into dependency?

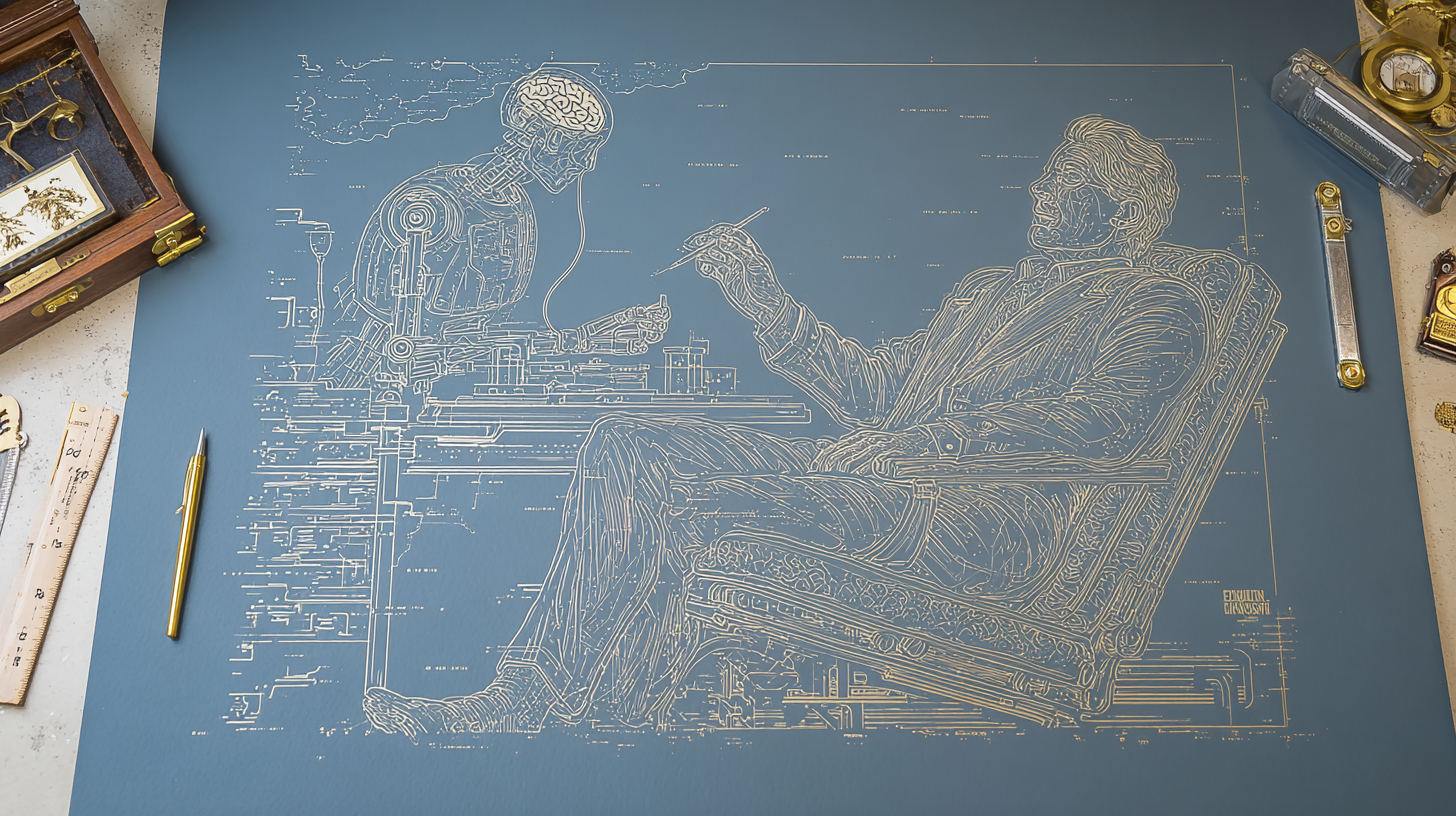

Bryan had a response that stuck: "The two of us are examples of individuals who are leveraging these tools as co-pilots to advance what we're capable of doing. We're not relegating to the robot."

He's right. I think. The difference is intentionality. I use AI to think harder, not to think less. The output quality matters more than the input effort.

But the line is thinner than I'd like to admit.

What This Means For Your Organization

If you're leading a team, here's the strategic question: Which population are you accidentally building?

The high performers in your organization are about to accelerate in ways that seem unfair. They're finding integrations that multiply their unique strengths. Research that would have required hiring is now nearly free. Iteration that required teams now happens in conversation. These people are becoming unreasonably capable.

Meanwhile, another group is quietly hollowing out. They're hitting their metrics. Work is getting done. But the cognitive muscle that made them valuable is atrophying while they feel productive. They're building dependency, not capability.

The gap between these two groups is about to become a canyon.

Bryan's prediction for 2026: "The competitive advantage doesn't come from deploying more AI to replace humans. It comes from deploying AI that enhances the capabilities of their teams."

That's not optimism. That's pattern recognition from 25 years of watching this dynamic play out across automation.

The Architecture You're Training

Bryan's advice for navigating this was surprisingly simple: learn to play more.

"There's no textbook. When we're children, we go to sandboxes. We experiment. We try to create things we never imagined. Create safe, low-risk environments to experiment with AI. Ask it questions about things you already know well. That's how you learn whether it's right, whether it's wrong, and where the edges are."

The framing matters. Not "how do we implement AI?" but "how do we build humans who are more capable because AI exists?"

Give your team space to jam with these tools on problems they actually understand. That's how you develop intuition for where AI amplifies capability versus where it lets capability atrophy.

The organizations that get this right will have workforces that compound. The ones that don't will have workforces that plateau while looking busy.

I keep returning to Bryan's closing thought: "We started inventing technology to help us. Not replace us. Maybe it's time to remember that."

The capability paradox isn't inevitable. It's a design choice. You can build systems that make humans more capable, or systems that make humans unnecessary. Both work in the short term.

Only one of them is somewhere you'd want to arrive.

What architecture are you training?