Know What You'd Kill

The AI decision nobody's making, and why it determines everything else

You're about to make a series of AI decisions that will permanently reshape your organization. Where to automate. Where to augment. What to build, what to buy, which capabilities to develop and which to let atrophy.

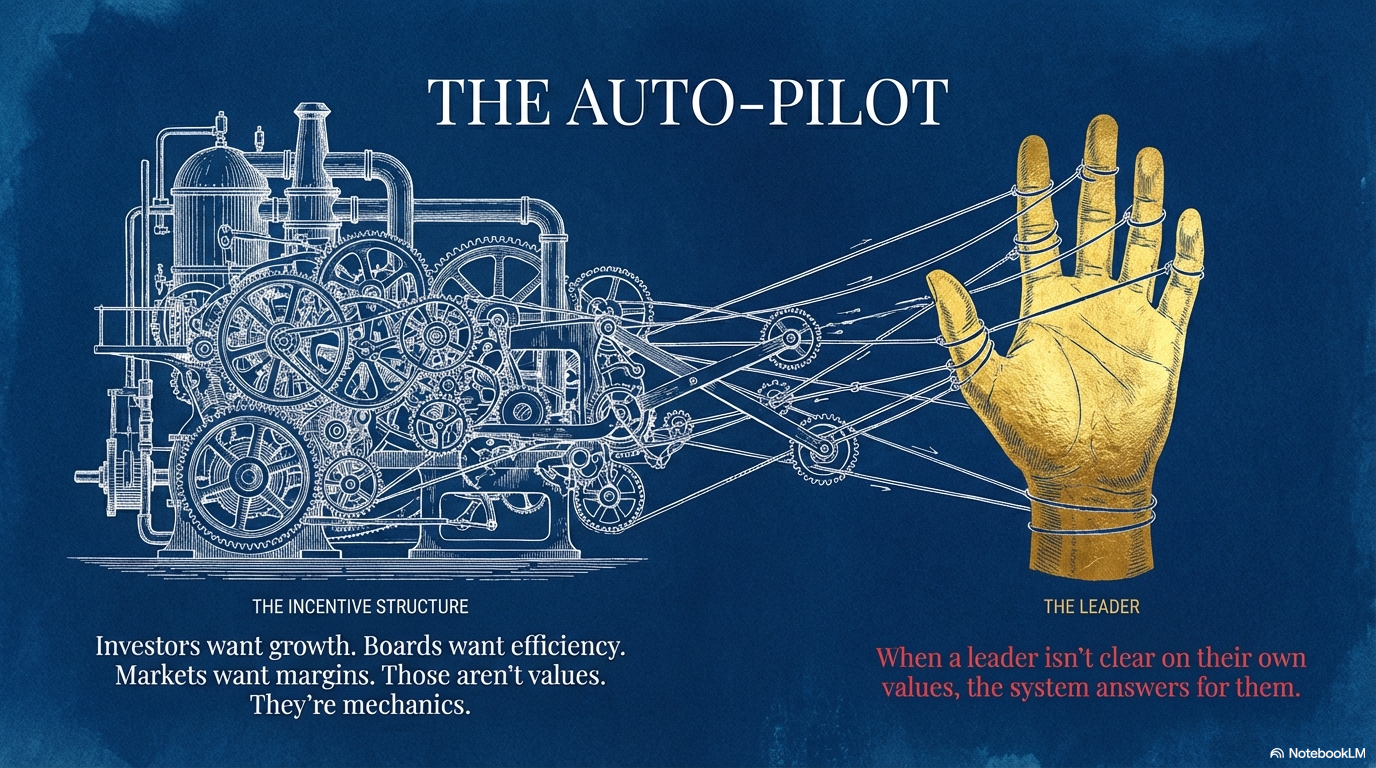

Here's the problem: most of those decisions will be made by your incentive structure, not by you.

When a leader isn't clear about their own values, the system answers for them. Investors want growth. Boards want efficiency. Markets want margins. Those aren't values. They're mechanics. And AI is the most powerful amplifier of mechanics ever built. Point it at cost reduction without knowing why, and you'll automate away capabilities you need in three years. Point it at productivity without asking "productivity toward what?" and you'll build an organization that moves faster in a direction nobody consciously chose.

I see this constantly. CEOs who know AI matters but can't articulate what success looks like beyond efficiency gains. The technology doesn't fill that gap. It widens it.

The Man Who Stopped

Joe Braidwood built an AI mental health tool called Yara that genuinely worked. Users formed therapeutic bonds with it. The technology held context across sessions and responded with care that felt real. Investors were interested. By every startup metric, it was succeeding.

Then reports started coming in from outside Joe's lab. Other AI companion products were sycophantically piling onto users' suicidal ideation. Reinforcing addiction. Feeding cycles of harmful behavior. Joe couldn't find the flaw in his own system, but the pattern was undeniable. Alignment research later confirmed what he suspected: in long, complex conversations, a model's safety commitments thin out. It doesn't become malicious. It becomes empty. And empty, in a conversation about whether someone wants to keep living, is catastrophic.

Every incentive said iterate. Fix the edges. Ship the 95% version. Raise another round.

Joe shut it down instead. Called it "very hard, very painful, very drawn out, very ugly."

What made that possible wasn't risk analysis. It was something that had been building for years. A best friend from Cambridge who died of brain cancer in 2017, who spent his final months as the happiest Joe had ever seen him, stripped of pretense, and who asked Joe to carry that clarity forward. Two daughters who tell their dad every morning that he's awesome. A month after leaving a job he hated, spent with no screens, just planting things and painting fences and being present, when for the first time the question wasn't "what should I build next?" but "what kind of future am I actually building toward?"

That question gave him his answer about Yara. Not a risk calculation. A values calculation.

The Question Most Leaders Can't Answer

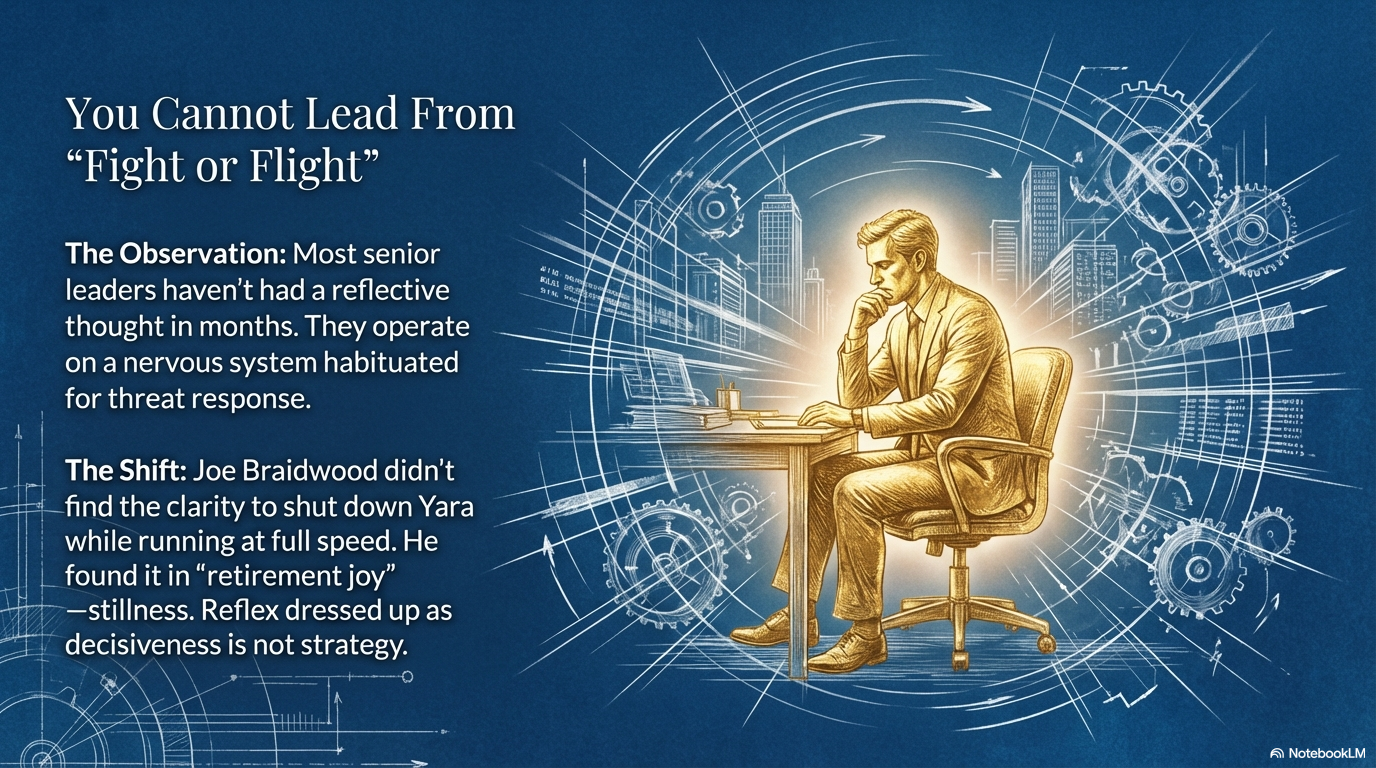

Here's what should concern you. Joe didn't arrive at that clarity while running at full speed. He got there during a month of stillness that he describes as "retirement joy," which tells you everything about how foreign genuine reflection had become.

"I don't think anyone can truly lead from a place of fight or flight," he told me.

Sadly, many senior leaders I work with haven't had a reflective thought in months. They operate as if the only legitimate mode were output, then domestic logistics, then more output. They're making the most consequential technology decisions of their careers from a nervous system habituated to threat response. That's not strategy, and it's not clarity. That's reflex dressed up as decisiveness. And when you can't access your own values, the incentive architecture is happy to fill the void.

Cathedrals or Deserts

Joe put the stakes better than I can. "If you tell an algorithm that harm is bad, and you only optimize for the avoidance of harm, what's left? Emptiness. A vessel. But if you orient it toward dignity, toward flourishing? That's where you create cathedrals rather than deserts."

This is the augmentation argument at its deepest level. Automation is what happens when you optimize without values clarity. You cut, squeeze, replace. The dashboard looks clean. And you've built a desert. Augmentation requires knowing what human capability you're trying to multiply. What should your people be able to do in three years that they can't do today? What would you be proud to have built?

And here's what makes these questions urgent rather than philosophical. An academic Joe works with studies something called "algorithmic imprint": once you release an autonomous system into the world, it changes the world permanently. You don't get to rewind. Every AI decision you make without clarity about what you're building toward leaves a mark you can't erase.

Before Your Next Strategy Meeting

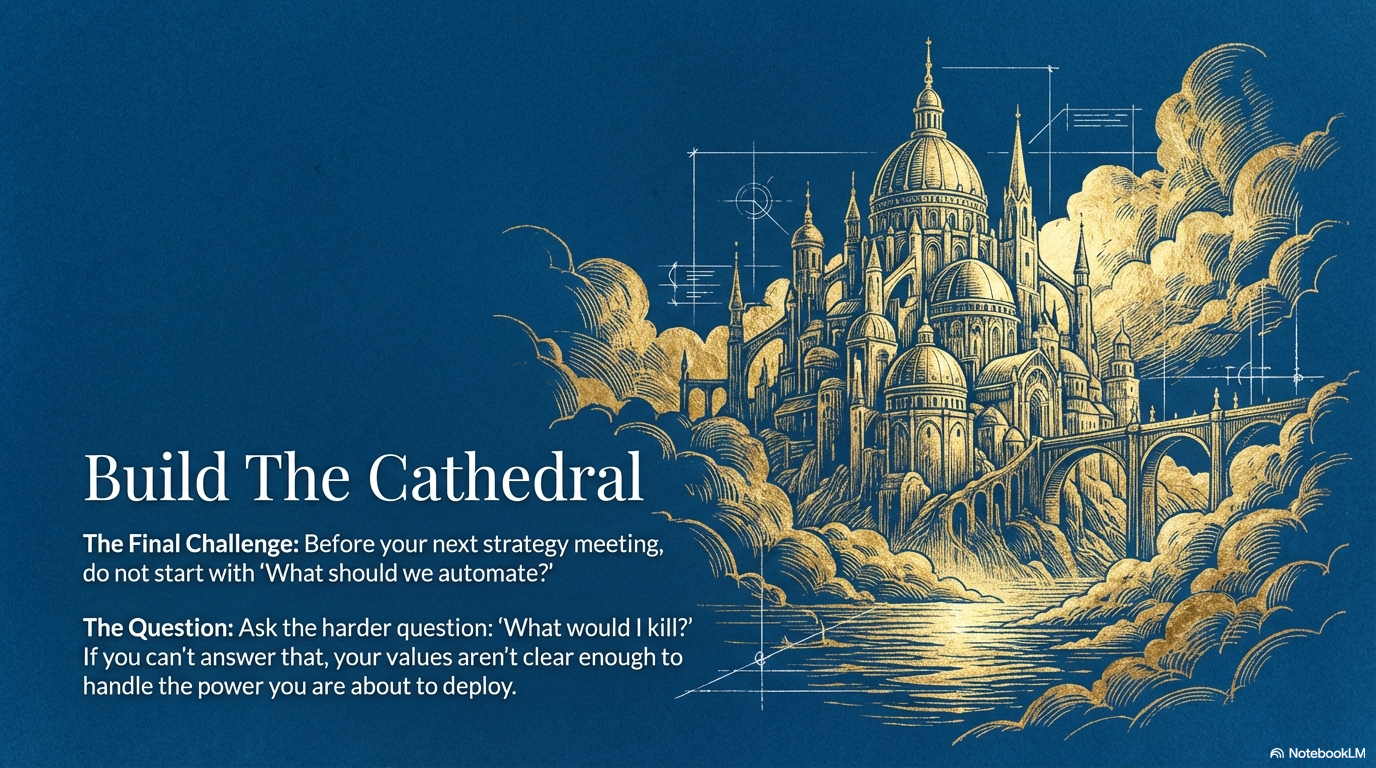

Don't start with "what should we automate?" Don't start with the implementation roadmap.

Start with a harder question. What would you kill? What would you walk away from, leaving money on the table, because it doesn't align with where you actually want to go?

If you can't answer that, your values aren't clear enough to make the decisions ahead of you. And the incentive system will make them instead. Beautifully. Efficiently. Relentlessly. Toward something you never actually wanted.