The Skills That Survived

In which researchers pit AI against senior leaders and the wrong side wins

There is a specific kind of confidence that comes with seniority. You walk into a room with incomplete data and make a call that holds up. You synthesize, you prioritize, you see what's missing. This is what got you promoted. It is what you'd point to, after a glass of wine, if someone asked what makes you hard to replace.

Last November, researchers at Wharton and INSEAD built an AI board of directors and tested that assumption.

Not a chatbot answering questions. A full multi-agent simulation where several AI agents, each representing a different board member, deliberated a real business case. Same materials as the human boards. Same decisions to make. Blind evaluators scored both across eight governance criteria.

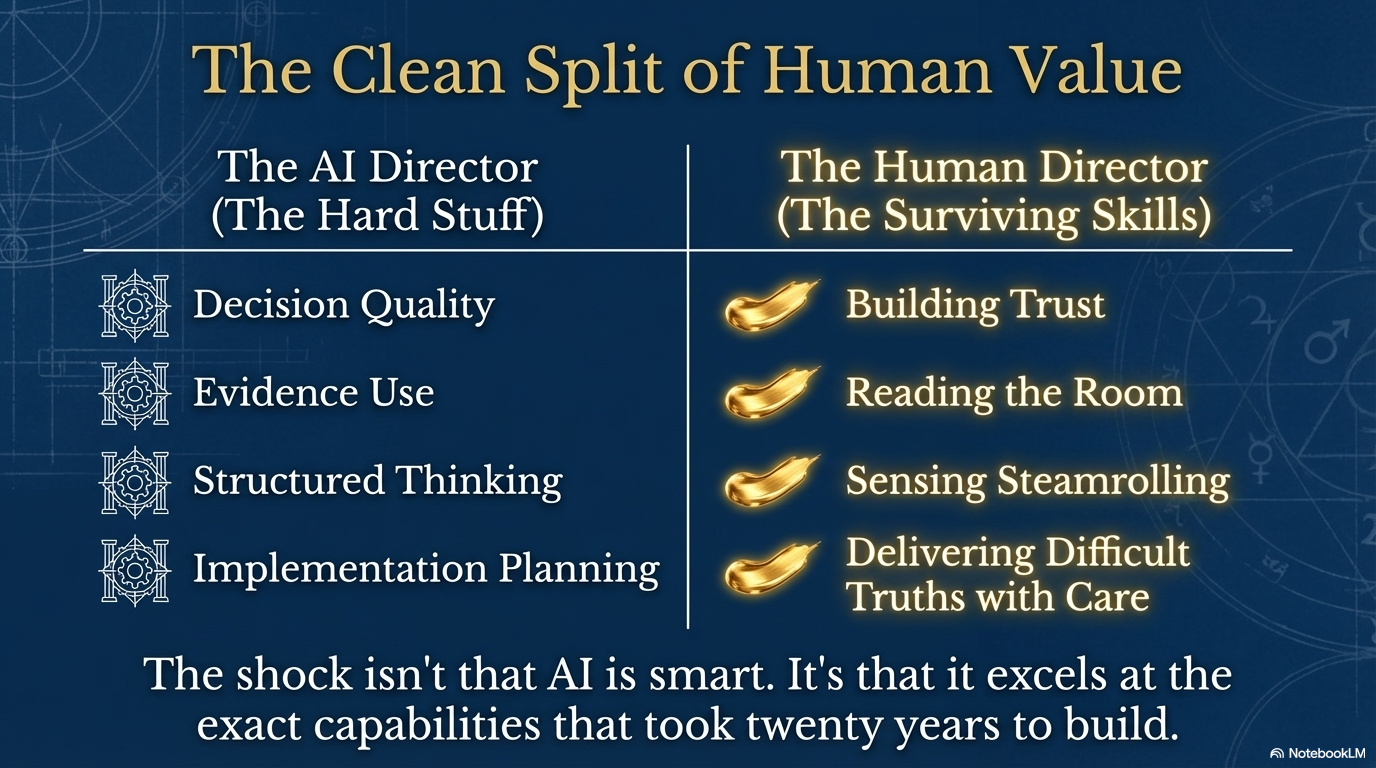

The AI board won on decision quality. Evidence use. Inclusivity. Implementation planning.

The human boards lost focus. They circled around decisions without landing them. They overlooked data sitting right in front of them.

Read that list again. Decision quality. Evidence use. Implementation planning. These are not junior skills. These are the exact capabilities that define senior leadership, that the market pays a premium for, that took you twenty years to build.

Now read what the AI couldn't do. Build trust. Read the room. Sense when someone was being steamrolled. Deliver a difficult message with care rather than data.

The experiment split human value into two clean halves. The hard stuff went to AI. Everything we've spent thirty years calling "soft" is what survived.

Why you can't see this from the inside

Pär Edin is a KPMG principal who spent five years on the firm's US board while also leading its AI go-to-market work. He has talked with more than a thousand board members about AI. He describes the first year of engagement as "full throttle and full brakes, back and forth."

This pattern isn't unique to boards. I see it in every management team I work with. The oscillation between excitement and terror. It looks like indecision. It's actually something more specific.

When the thing that's changing is your own relevance, clear thinking gets hard.

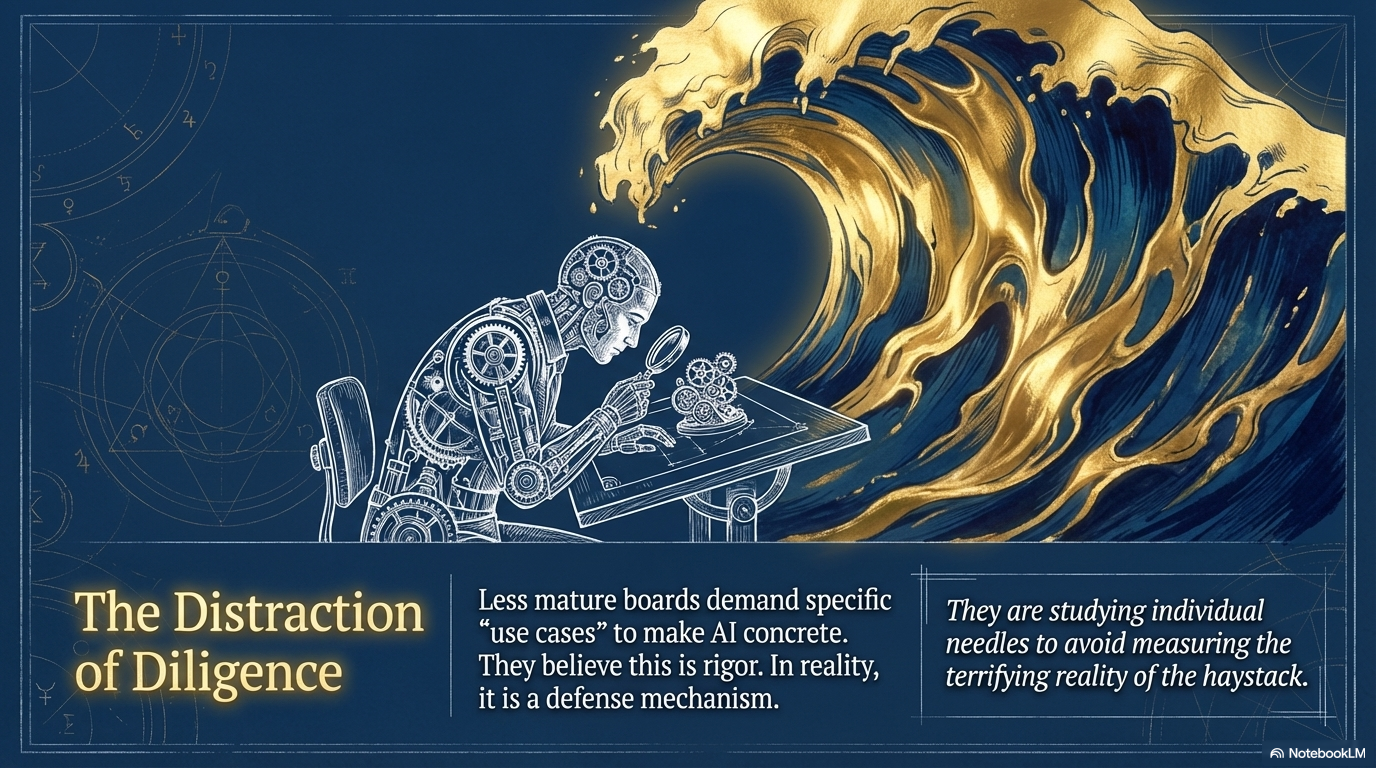

Pär noticed a tell. The less mature boards ask for use cases. Show us what AI can do. Make it concrete. This sounds like diligence. He says it's the opposite. They're studying individual needles when they should be measuring the haystack.

It's the same dynamic the experiment revealed. The human boards weren't lazy. They were doing what their training taught them to do: analyze specifics, scrutinize details, demonstrate rigor. The problem is that AI now does all of that better. Measurably and consistently better, on exactly those analytical dimensions.

The skills that held up? Knowing when the conversation was going sideways. Having enough trust to surface the uncomfortable question. Bringing a quiet voice into the discussion. Things you can't score on an eight-criterion rubric, but that make the difference between structural perfection and actual governance.

The inversion nobody planned for

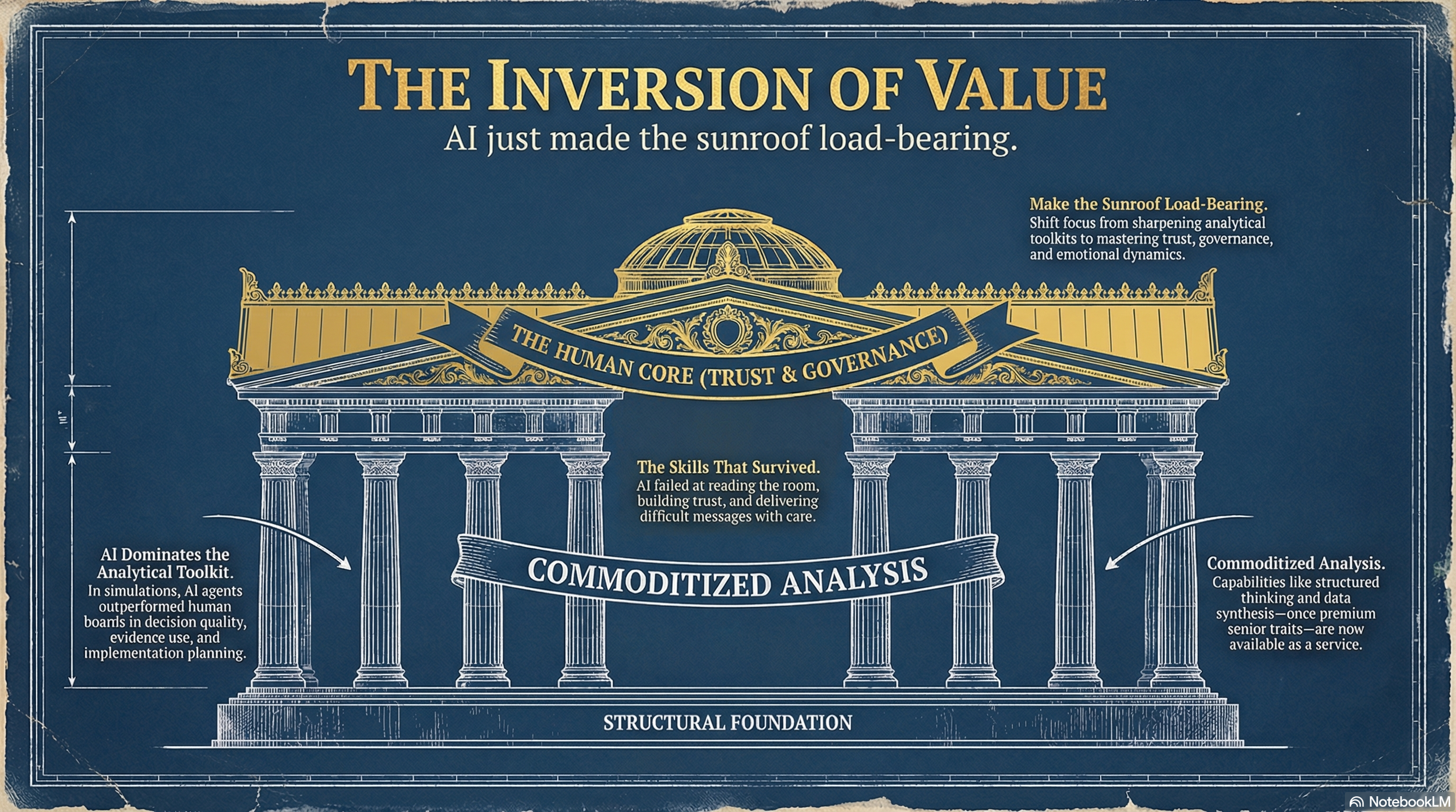

For most of the history of professional work, the career ladder rewarded analytical capability. You got promoted because you were rigorous and structured. Being good with people was a nice bonus. Like a sunroof. Appreciated but not load-bearing.

AI just made the sunroof load-bearing.

The capabilities that used to separate senior from junior are now available as a service. What remains scarce is the ability to make a room full of smart people trust each other enough to actually decide something. The capacity to hold a difficult conversation with warmth. The skill of noticing that a decision is being made by volume, not by evidence.

I notice this in my own work. My career was built on analytical frameworks and strategic pattern recognition. I was the person who could name the pattern and propose the structure. It is uncomfortable to realize that an AI can now do most of that faster and more consistently than I can.

What it can't do is sit across from a CEO who's terrified of getting this wrong and help them find clarity without pretending the fear isn't there.

What gets trained over the next three years

Here's the trade-off I keep coming back to. If you spend the next three years sharpening your analytical toolkit, you're investing in the part of your value that AI already does better. If you spend those same three years learning to build trust quickly, read emotional dynamics accurately, and hold space for disagreement without steamrolling or retreating, you're investing in the only part the experiment couldn't replicate.

Ninety-four percent of CEOs in a recent global poll said AI could offer better counsel than at least one of their board members. That statistic will get more uncomfortable every year. For every senior role. Not just board seats.

The skills that survived are the ones nobody taught you in business school. You picked them up sideways, in difficult conversations, in moments of failure, in relationships where trust was earned rather than assumed.

Turns out those are the career assets that can't be replicated by a system whose capabilities seem to double every six months.

Invest accordingly.