The Only Asset AI Can't Build

Your AI strategy is sending your employees a message about what you believe humans are for. Most companies have no idea what it says.

By this point (April 2026), most companies I know are running some version of an AI strategy. Very few of them realize they're also running a communications strategy, aimed at their own workforce, about what they think people are worth.

This is not a metaphor.

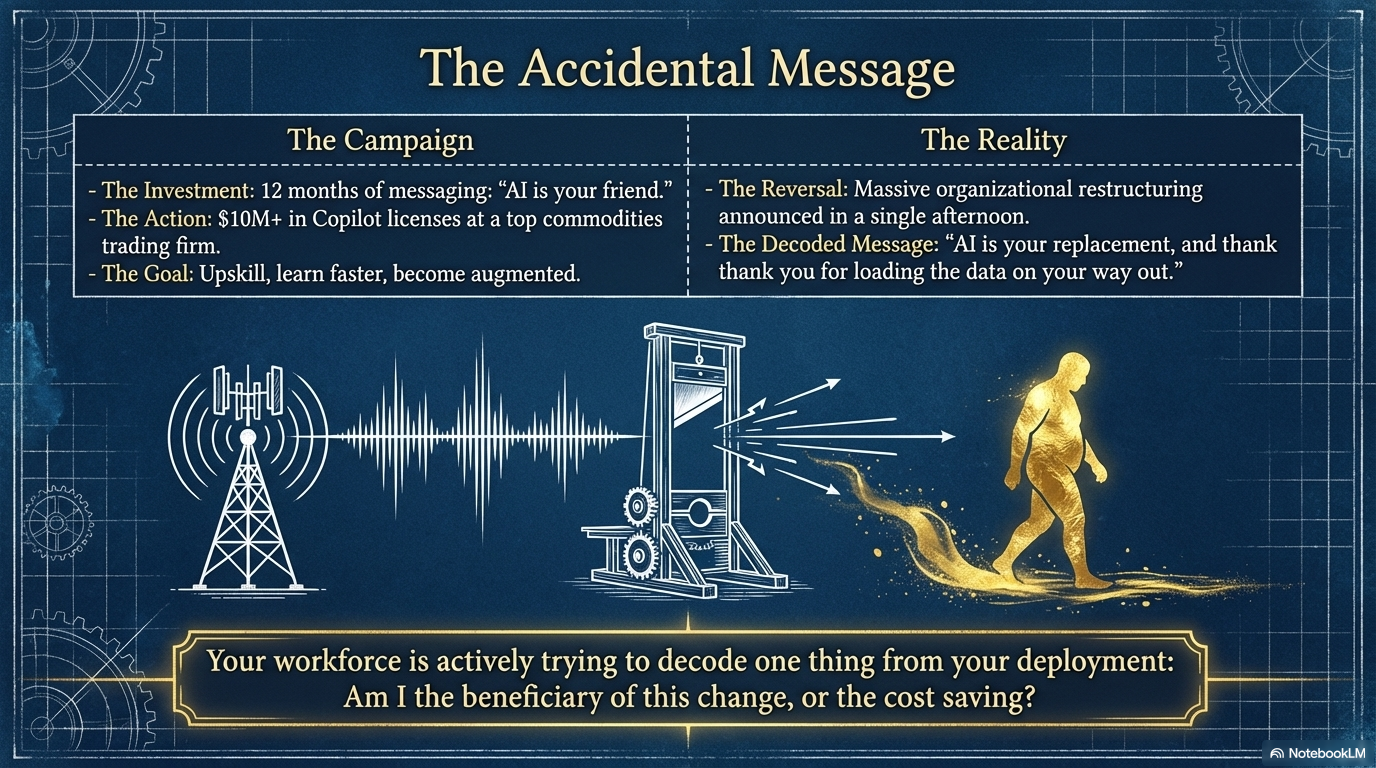

It is happening in your building right now, whether you've approved the messaging or not. Every tool you deploy, every role you restructure, every all-hands where you say "AI will make us stronger" is being decoded by a thousand people trying to figure out one thing: am I the beneficiary of this change, or the cost saving?

Peter Whaley, a former EY equity partner and author of Lead with AI, Stay Human, told me a story on ThinkRoom recently that I haven't been able to shake. A commodities trading firm had spent a year telling its people that AI would make them better. Use it more. Track your usage. Learn faster. They'd invested over ten million in Copilot licenses. They'd hired Peter to come in and talk about learning, trust, self-improvement.

Two days before his presentation, they called. Cancel everything. Massive restructuring.

For twelve months, the message had been: AI is your friend. The actual message, delivered in a single afternoon, was: AI is your replacement, and thank you for loading the data on your way out.

Peter is still waiting for that speaking slot. The company, I suspect, is still waiting for its culture to recover. One of those waits will end first, and I wouldn't bet on the company.

The Pattern Under the Pattern

I've been thinking about why this keeps happening, and not just at one commodities firm. Peter shared story after story on the podcast, each one a variation on the same theme.

Technology changes; human nature doesn't. People will not change their behavior inside systems they don't trust.

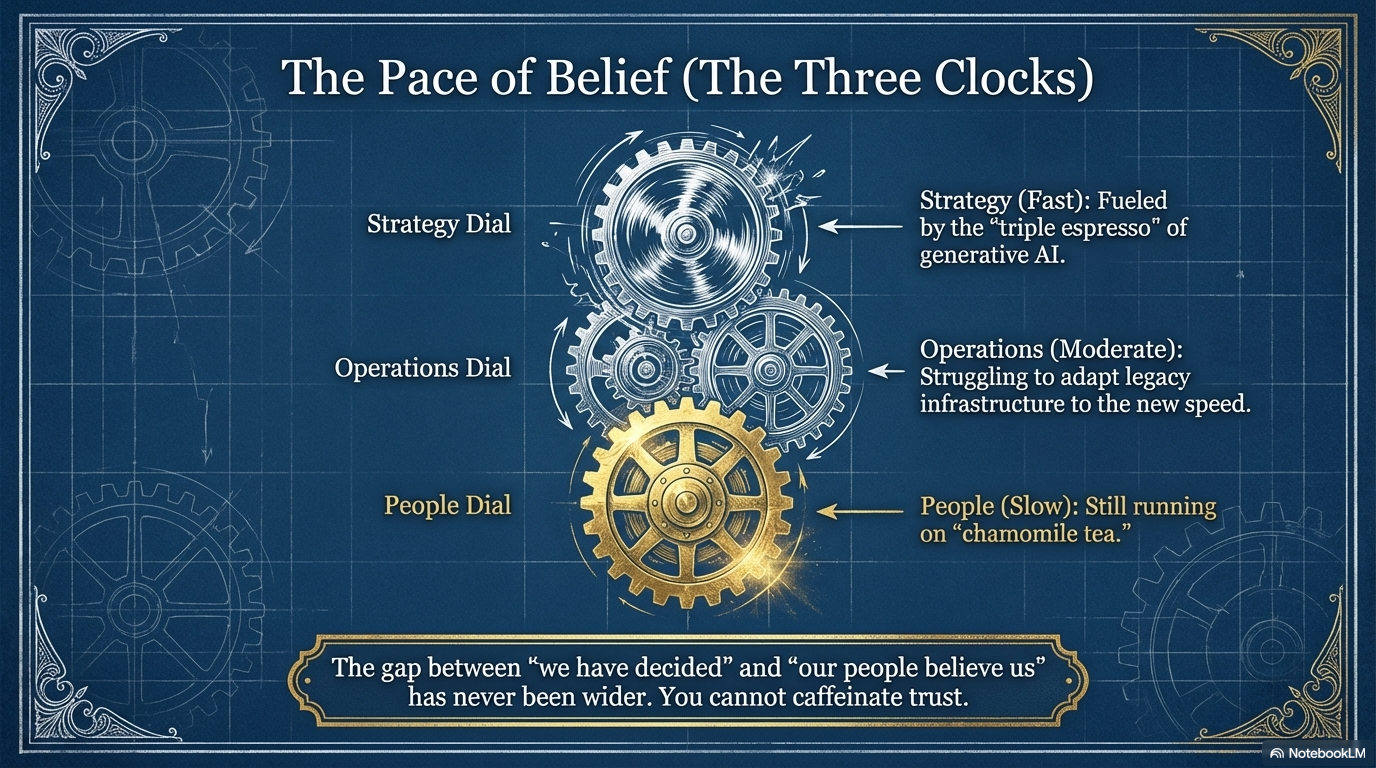

Which brings me to something Peter calls the three clocks.

Strategy moves fast.

Operations move slower.

People move slowest of all.

The problem is not that organizations don't understand this. Most leaders, if you cornered them at a dinner party, would nod along. The problem is that AI has given the strategy clock a triple espresso while the people clock is still on chamomile tea. The gap between "we've decided" and "people believe us" has never been wider.

The Imagination Test

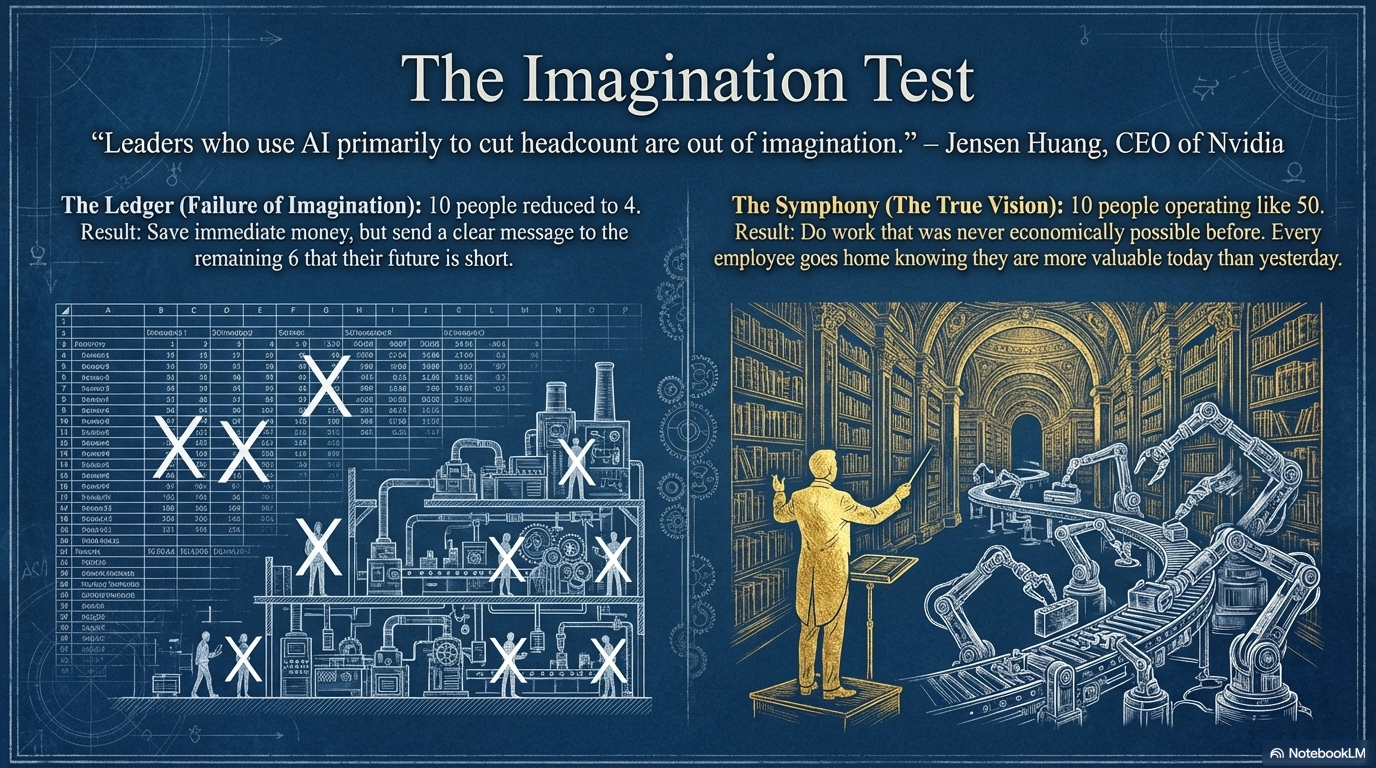

Jensen Huang said something at GTC a few weeks ago that I think is the most important sentence any CEO has uttered about AI this year. The man who sells the chips told the people buying them that leaders who use AI primarily to cut headcount are, in his words, "out of imagination."

He wants to double Nvidia's headcount. His vision: one person managing a hundred AI agents, not a hundred people replaced by agents.

Sit with that for a second. The CEO of the company that profits most from AI adoption is telling his own customers they're using his product wrong.

In one version of AI strategy, you had ten people and now you need four. You've saved money and sent a very clear message to the remaining four about what their future looks like (short). In the other version, your ten people now operate like fifty, doing work that was never economically possible before, and every one of them goes home knowing they're more valuable today than yesterday.

Same technology. Same chips. Same quarterly earnings pressure. Completely different message to your workforce about what you believe humans are for.

The Doorman Problem

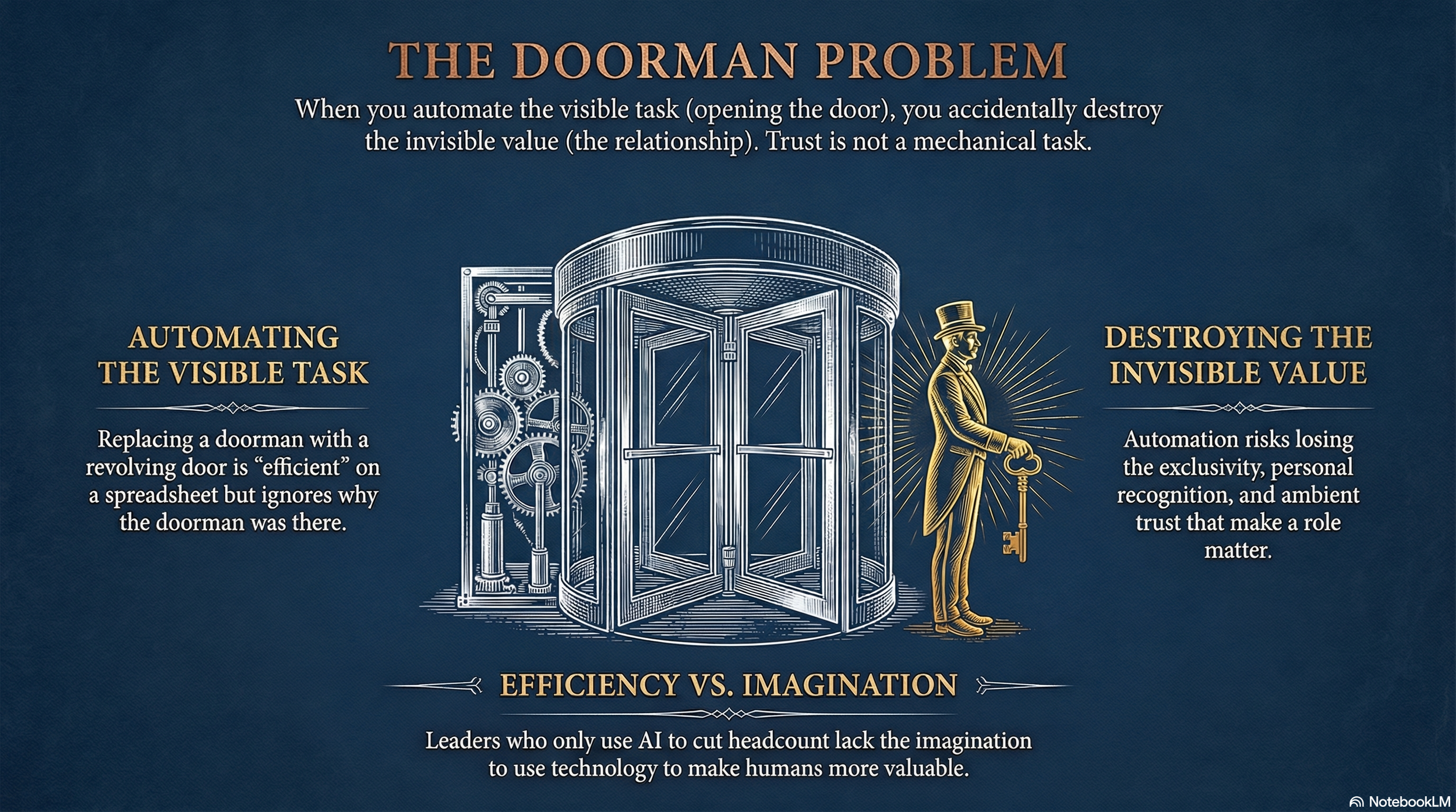

Peter and I then turned to Rory Sutherland's doorman example, which I think is the cleanest diagnostic for what's going wrong. When cost-cutting consultants look at a hotel doorman, they see someone who opens doors. So they install a revolving door and pocket the savings. Efficient. Measurable. Defensible on a spreadsheet.

But the doorman was never about the door. It was exclusivity signaled at the threshold. Returning guests greeted by name. An ambient sense of being looked after that makes the difference between a hotel and a building with beds.

This is happening at industrial scale right now. Companies auditing roles, identifying the "door-opening" tasks, automating them, and accidentally destroying the invisible value that made the role matter. The institutional memory. The client relationships. The judgment that took fifteen years to build and can walk out the door in fifteen minutes.

Peter told me about a graphic designer friend who perfectly illustrates the flip side. Peter built his book cover with AI. Got ninety percent there. Could not crack the last ten. His designer friend finished it in a weekend. That ten percent, the taste, the judgment, the thing a client actually came to you for, is now the whole game.

The question for every leader is: are you building a company that values the ten percent? Or are you one restructuring announcement away from watching it walk out?

The Practical Bit

I've had four CEO conversations in the last two weeks that all circled the same question: are we winners or losers from AI? Every one had been fielding board pressure for months. Every one admitted they'd been improvising answers.

None of them were asking what I think is the prior question: would our people say this strategy is being done for them or to them?

Here's a concrete way to find out. Pick your most recent AI initiative. Now imagine you're a mid-level manager in that department. You've seen the announcement. You've heard the all-hands. You've watched the consultants arrive.

What message did you actually receive? Not the one on the slide. The one you decoded from watching what happened next. Did people get promoted for experimenting? Or tracked for compliance? Did the freed-up time become space for higher-value work? Or did headcount quietly shrink? Did leadership say "this will make you better" and then behave as if that were true?

The gap between the intended message and the received message is your trust deficit. And that deficit is the single best predictor of whether your AI investment will compound or collapse.

Because every AI strategy is, underneath everything, a statement about what you believe humans are for.

Most companies are accidentally saying: less.

The ones that will still be here in ten years are saying: more, but different. And their people believe them.