Engineers Went First. You're Not Far Behind.

What software teams learned about AI that every profession will learn the hard way.

For several years, I ran the engineering department of a software company. I should tell you straight away that I am not an engineer. Fredrik Colldahl Scherstén was the actual engineer. He was my CTO, which is a polite way of saying he understood what was happening and I understood why it mattered, and between the two of us we managed to keep things moving in approximately the right direction.

I mention this because being the non-technical person in a room full of engineers during an AI transformation is an extraordinarily useful vantage point. You notice things that the people inside the change can't see, mostly because they're too busy living through an identity crisis to describe it clearly.

I'll give you an example:

For years, we talked about adding a dark mode to our application. If you don't know what that means, it's the option to make your screen dark instead of blindingly white, and if that sounds simple, I thought so too. Fredrik and his senior engineers estimated it at weeks of work. The styling system would need restructuring. Legacy code would need updating. Architecture decisions would need revisiting. It was one of those features that lived permanently on the roadmap under the heading "would be nice, won't happen."

Then one afternoon last autumn, I posted a slightly obnoxious comment in our Slack channel. Something like: shouldn't we just ask the AI to do this? One of our engineers, Markus, who has a habit of coding at hours when reasonable people are sleeping, picked it up that evening. By morning, we had a complete dark mode across the entire application.

Fredrik and I sat with that for a moment. This was a feature that two senior engineers had estimated at weeks. It had taken one evening. Not because Markus worked faster. Because the process that used to require weeks of human coordination had, for reasons I'll get to shortly, collapsed into something fundamentally different.

That word, collapsed, is important. I don't mean it improved or accelerated. I mean the stages stopped being stages. Requirements, design, implementation, review. They folded into each other like a concertina and what fell out the other end was a finished feature and two engineers staring at their screens wondering what exactly their jobs were now.

This is where the story gets interesting. Not for engineers (they already know). For everyone else.

The accidental preparation

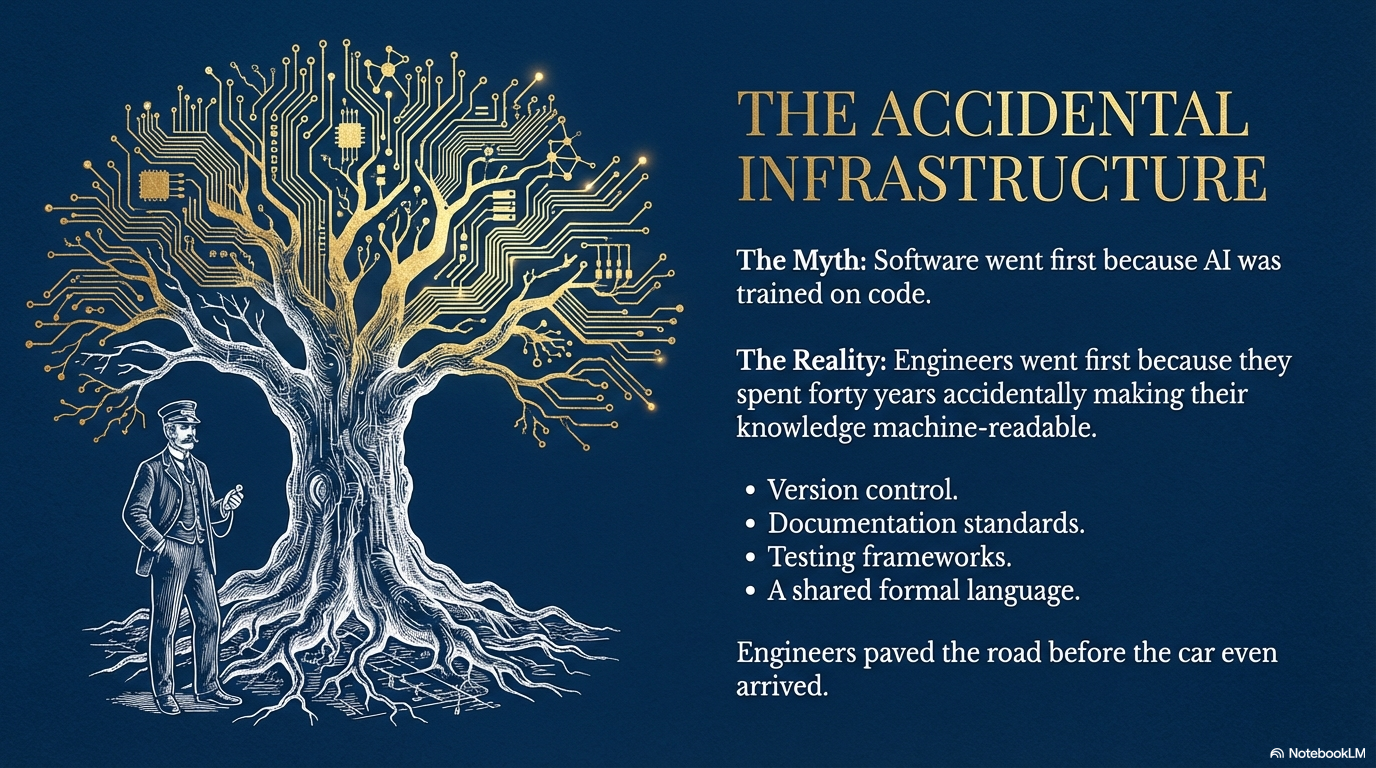

The conventional explanation for why software was first goes like this: AI was trained on vast amounts of code, so naturally it got good at code first. This is true in the way that saying humans need oxygen is true. Correct, but it skips the interesting part.

What engineers actually had, and what nobody else has, is forty years of accidentally making their knowledge machine-readable.

Think about what existed in a modern software team before AI showed up. Version control tracking every change ever made. Documentation standards. Testing frameworks. Design tools with exportable specifications. A shared, formal language for describing what exists, what should exist, and precisely where the gap is between those two things.

No one built any of this for AI. Engineers built it for themselves, to manage complexity across teams and years. But the effect was identical. By the time AI arrived, it walked into a profession that had spent four decades building exactly the infrastructure needed to make AI useful.

Fredrik described the breakthrough moment, and it wasn't a better model. They'd been testing AI tools for months with underwhelming results. The shift came when they connected the AI to the full context: the existing codebase, the Figma design files, the requirement specifications. When the AI could see the whole picture rather than answering questions about isolated snippets, everything changed.

Early AI for engineers was, as Fredrik put it, a smarter Stack Overflow. You paste a fragment, ask what's wrong, get an answer. Useful in the way that a torch is useful if you need to cross a dark room. But if someone turns on the lights, the torch becomes a bit beside the point.

Context was the light switch.

The archaeology problem

Now here is the uncomfortable question. Think about your profession, whatever it is.

Do you have the equivalent of a codebase? A single, structured, machine-readable repository of everything your organisation knows about how it operates? The accumulated decisions, the institutional memory, the "we tried that in 2019 and here's exactly what happened"?

You almost certainly don't. You have it scattered across email threads, shared drives with folder structures that reflect three different organisational charts, meeting notes nobody reads, and the heads of people who might leave next quarter. You have plenty of context. But it isn't infrastructure. It's archaeology.

To understand what this means in practice, consider what a good salesperson actually knows. They know their product's real selling points: not the ones on the website, but the ones that close deals. They know their ideal customer. They know what the last twenty proposals looked like and which ones won. They know the pricing logic, the discount thresholds, when to push and when to hold. They know the competitors: what the client saw in the last vendor demo and what bothered them about it. They know the compliance requirements. They know the tone that works in a formal tender versus an email to someone they've known for six years.

All of that is context. And almost none of it is written down anywhere an AI can reach.

So when a company gives its sales team access to AI and says "use this to write proposals," here is what actually happens. The AI, which is perfectly intelligent but knows nothing about this company, this market, or this client, produces something that reads like a competent stranger's best guess. Which is exactly what it is. The salesperson spends forty-five minutes fixing it, wonders why they bothered, and goes back to doing it the old way.

Now picture the same salesperson who has spent a few weeks doing the unglamorous work. Documenting the ideal customer profile. Feeding in winning proposals. Writing down pricing structures and competitive positioning. Capturing the brand voice. Recording the compliance rules that govern what can and cannot be promised.

The AI doesn't become incrementally better. It becomes categorically different. It writes a proposal that sounds like a senior colleague who has been paying close attention for years, because in a meaningful sense, it has been. It flags that this prospect evaluated a competitor last quarter and lost a deal over implementation timelines, so it emphasises your onboarding speed. It knows this industry requires certain regulatory language and includes it without being asked.

The same model. The same subscription cost. The reason the output is unrecognisable is that AI doesn't work the way most people think. It doesn't have fixed capabilities that improve when a new version comes out, like updating an app. It has nearly unlimited capability that scales with what it knows about your situation. A piece of context doesn't just add information. It changes which abilities become relevant. Give it nothing and you get a gifted generalist. Give it everything and you get something closer to the best person on your team on their best day, working at a speed no person could match.

This is why most AI deployments are disappointing. Companies buy licences, hand everyone a chatbot, and call it transformation. The AI is already good enough. It has been for a while. The bottleneck was never capability. It was context.

I discovered this myself in an unexpected setting. I moved to Spain recently, where administrative processes appear to have been designed before the widespread adoption of electric light. I've been dealing with lawyers on immigration paperwork, so I trained AI agents on Spanish legal frameworks, our family's financial situation, all the relevant specifics. Those agents answer my legal questions more clearly than the lawyers I'm paying by the hour.

The lawyers still matter. Their value right now is being a Spanish person who walks into a municipal office and talks to another Spanish person. The human relationship is the product. But the analysis, the research, the interpretation? That transferred the moment I gave the AI proper context.

What happens after the collapse

Fredrik barely writes code anymore. His entire day is decisions. The AI presents options, asks which direction, waits for a clear answer. He described it as the moment when non-decisions become impossible. Before, you could defer, delay, give a vague answer in a meeting and get away with it. Now the system needs an actual input and it will sit there, politely and infinitely, until it gets one.

He built his professional identity over fifteen years, starting as a terrified junior consultant at a Stockholm IT firm, coached to fake knowledge he didn't have, and working through imposter syndrome into genuine mastery. The craft of writing code is no longer the scarce resource. His knowledge is. His judgment is. His understanding of which decisions matter and which are noise. Everything that can't be copied in an evening by a developer and an AI with the right context.

Every company will save money with AI. Every company will get faster. Fredrik put it simply: that's not a competitive advantage if everyone does it.

The question is what you build with the time and capability you just freed up. What becomes possible now that wasn't before? What value can you create that didn't exist when humans were spending weeks on dark mode?

Engineers found out first because they'd spent forty years, entirely by accident, building the context infrastructure that made the answer visible. The rest of us will need to build it on purpose. The ones who start now will have their dark-mode-in-an-evening moment. The ones who wait will keep wondering why the AI feels like a disappointing intern.

It isn't the AI. It never was.